From sketch to video and playable 3D

By Oleg Sidorkin, CTO of Cinevva

I've spent years thinking about the gap between having a character in your head and having that character running around in a playable game. It used to be a chasm. You needed a concept artist, a 3D modeler, a rigger, an animator, and someone who could wire it all together in an engine. Or you needed to be all of those people yourself.

We just did the whole thing with AI tools, a rough sketch, and a lot of stubbornness. I want to show you exactly how, because I think this changes who gets to make games.

The character is Kali the Kitty from Cubcoats, a children's brand that sold over a million stuffed-animal hoodies through Nordstrom, Amazon, and Disney Store. Cubcoats has eight original characters with defined personalities, a fictional island world, and 14 patents. They're relaunching in 2026 as a licensing-first platform, and we wanted to show what that IP looks like when it moves beyond physical products: a vertical video short and a rigged 3D character you can walk around in a browser. Everything below is real output from that exploration.

Drag the 3D viewers to orbit. Scroll the film strip to see how the vertical short moves from first frame to last.

From flat book art to a character you can spin around

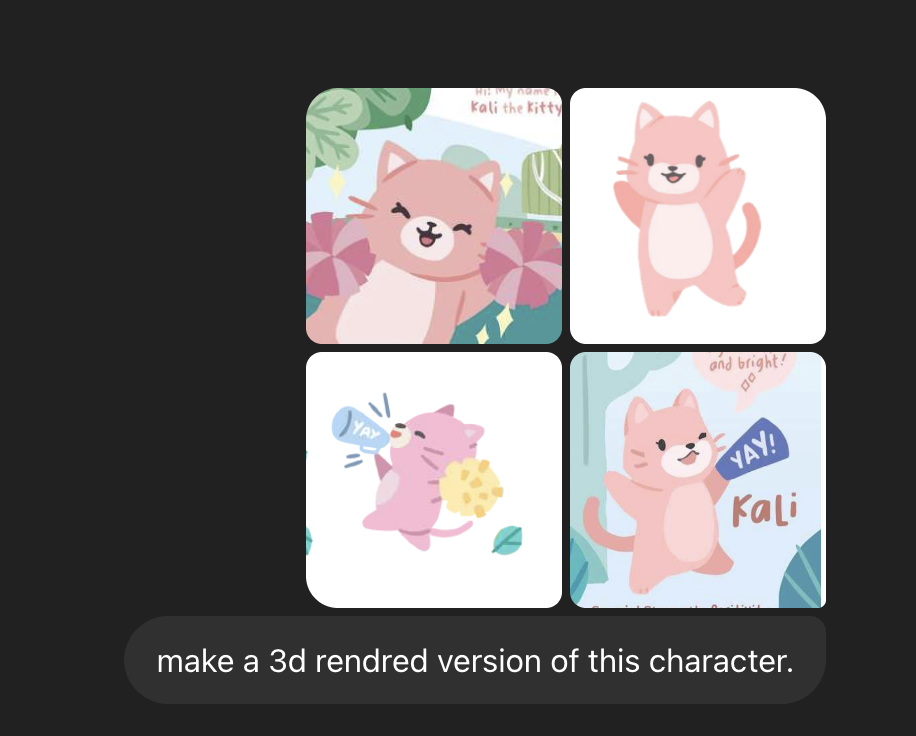

Cubcoats already had beautiful 2D illustrations. Mimi Chao's art direction gave every character a warm, rounded, hand-drawn look that works perfectly on hoodies and in storybooks. But flat art doesn't feed a 3D pipeline. We needed a high-fidelity character that could hold up as a reference for video generation, mesh creation, and rigging, all from the same face.

We started by giving an image model the original book illustrations and asking it to generate a 3D rendered version of the same character.

The proportions held, the personality came through, and the soft 3D cartoon look gave every downstream tool something consistent to agree on. From there we iterated on the exact pose and framing until we had a locked hero reference.

We also generated a T-pose version of the character. It matters because rigging expects arms away from the torso. Skip it and the auto-rigger fuses the arms to the body and gives up. We generated the T-pose from the same locked character look so the silhouette and proportions stayed consistent across every step.

Write the story before you generate anything

Before touching video tools, we wrote a beat sheet in plain language. Kali's personality trait in the Cubcoats universe is "Positive." She's the one who makes everyone feel included and stays upbeat when things get hard. So we built a ten-second arc around that:

Kali walks into a dark misty forest at night holding a tiny glowing lantern. She gets scared, sits down alone, almost gives up. Then she discovers a magical golden tree lighting up behind her. Wonder, joy, golden particles raining down. Fear to hope in one breath.

That's the whole plot. It doesn't need to be complex. It needs to fit one emotional turn into one continuous shot so the start frame and end frame can actually connect.

Two frames that bookend the whole thing

We generated two key stills from that plot using an image model (ChatGPT). Portrait aspect to match the vertical short. The prompts describe the exact same character in two different moments.

Start frame prompt:

3D animated Pixar-style render of Kali, a small pink kitty character with large round dark eyes, pink pointed ears, a light pink belly, and a cheerful round face. She is standing at the edge of a dark misty forest at night, holding a tiny glowing lantern in both paws. Her ears are slightly flattened and her expression is nervous but determined. Cinematic lighting with cool blue moonlight from above and warm orange glow from the lantern. Dense fog between dark tree trunks in the background. Camera angle: medium shot, slightly low looking up at her. No text, no watermark.

End frame prompt:

3D animated Pixar-style render of Kali, a small pink kitty character with large round dark eyes, pink pointed ears, and a light pink belly. She is standing in front of a massive magical tree covered in glowing golden flowers, arms outstretched wide, beaming with a huge joyful smile. Golden petals float in the air around her. The tree radiates warm golden light that illuminates the entire forest clearing. Starry night sky visible above. Camera angle: wide shot from slightly below, epic reveal composition. No text, no watermark.

Seedance interpolates between these two bookends. The core character description (small pink kitty, large round dark eyes, pink pointed ears, light pink belly) stays the same across both prompts so the model treats her as the same character. Only the scene, emotion, and camera change.
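One way to keep the shared description from drifting between prompts is to build both from a single character string. The sketch below is a minimal illustration in Python, not the workflow we actually used: `build_prompt`, `CHARACTER`, and `SUFFIX` are hypothetical names, and the scene text is shortened from the prompts above.

```python
# Hypothetical helper: one shared character block, scene-specific details per frame.
CHARACTER = (
    "3D animated Pixar-style render of Kali, a small pink kitty character "
    "with large round dark eyes, pink pointed ears, and a light pink belly."
)
SUFFIX = "No text, no watermark."


def build_prompt(scene: str, camera: str) -> str:
    """Join the shared character block with the scene and camera for one frame."""
    return f"{CHARACTER} {scene} Camera angle: {camera}. {SUFFIX}"


start_prompt = build_prompt(
    scene=(
        "She is standing at the edge of a dark misty forest at night, "
        "holding a tiny glowing lantern in both paws."
    ),
    camera="medium shot, slightly low looking up at her",
)

end_prompt = build_prompt(
    scene=(
        "She is standing in front of a massive magical tree covered in "
        "glowing golden flowers, arms outstretched wide."
    ),
    camera="wide shot from slightly below, epic reveal composition",
)
```

Because both prompts start from the same string, any tweak to the character's look lands in the start frame and the end frame at once.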

The video came out better than expected

We used Seedance 1.5 Pro in start-and-end-frame mode with a single prompt. Native audio was generated in the same pass. Here are frames sampled along the timeline.

Left to right: progression through the generated vertical short.

Start plus end frame was the right mode when we cared about hitting an exact closing pose. For longer emotional arcs, a single reference image plus a prompt worked well on Kling 3.0 Pro with storyboard-style multi-shot. Different tools for different jobs.

Same character, now in 3D

Here's where it gets interesting. The T-pose still, generated from the same locked look as the video references, went to Hunyuan3D (via fal.ai) for image-to-3D generation. You're not matching the game mesh to the video pixel for pixel. You're matching player memory. The character in the game should feel like the same personality as in the reel. And because we locked one visual identity at the start, it did.

Hunyuan3D outputs a textured GLB. Not game-ready, but recognizably Kali. The silhouette matched. The materials were close. Good enough to move forward.

Drag these around

Raw Hunyuan3D output from the T-pose image. No rig, just a textured mesh. Below, the same character after Tripo auto-rig with a baked walk animation.

Getting animations to actually work

This was the hardest part and the part where we failed the most.

Tripo rigged the model with a Mixamo-compatible skeleton, which sounded perfect. Mixamo has hundreds of animations. Upload the model, browse the library, download clips. Clean and simple.

Except Mixamo rejected our rigged GLB. "Unable to map your existing skeleton." We tried different export settings. Same error.

The fix was Blender. Import the GLB, apply the armature pose as the rest pose, export as FBX. That FBX uploaded to Mixamo without complaint. From there we could browse the full animation library: walk cycles, idle breathing, jumps, everything a game character needs.
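The same three steps can be scripted with Blender's Python API instead of clicked through by hand. This is a rough sketch under stated assumptions, not the exact procedure we ran: it assumes the GLB contains a single armature, uses Blender's default FBX export settings (the settings that worked for us are not recorded here), and the function names `fbx_path_for` and `convert_glb_to_fbx` are made up for illustration. If the mesh deforms after the pose is applied, it may need extra handling that this sketch does not cover.

```python
from pathlib import Path


def fbx_path_for(glb_path: str) -> str:
    """Return the output path: same folder and file name, with an .fbx extension."""
    return str(Path(glb_path).with_suffix(".fbx"))


def convert_glb_to_fbx(glb_path: str) -> str:
    """Import a rigged GLB, apply the current pose as the rest pose, export FBX."""
    import bpy  # only importable inside Blender's bundled Python

    # Start from an empty scene so only the imported character gets exported.
    bpy.ops.wm.read_factory_settings(use_empty=True)
    bpy.ops.import_scene.gltf(filepath=glb_path)

    # Assumption: the file contains exactly one armature object.
    armature = next(o for o in bpy.context.scene.objects if o.type == "ARMATURE")
    bpy.context.view_layer.objects.active = armature

    # Apply the armature's current pose as its new rest pose.
    bpy.ops.object.mode_set(mode="POSE")
    bpy.ops.pose.armature_apply()
    bpy.ops.object.mode_set(mode="OBJECT")

    # Export with default FBX settings (an assumption, see the note above).
    out_path = fbx_path_for(glb_path)
    bpy.ops.export_scene.fbx(filepath=out_path)
    return out_path
```

Run from Blender's scripting tab, a call such as `convert_glb_to_fbx("kali_rigged.glb")` (a placeholder file name) would write `kali_rigged.fbx` next to the input.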

Tripo's own preset animations (baked as separate GLBs) sometimes carried only root motion in the animation data. For blended gameplay animation with crossfading between states, Mixamo clips on the Mixamo-named rig were the reliable path.
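A quick way to spot a clip that only moves the root is to list which nodes its channels target. In glTF, each animation has `channels`, and each channel's `target.node` is an index into the top-level `nodes` array. The sketch below assumes the glTF JSON has already been loaded into a dict (from a `.gltf` file or the JSON chunk of a GLB). The function name and the example data are made up for illustration and are not real Tripo output.

```python
def animated_nodes(gltf: dict) -> dict[str, set[str]]:
    """Map each animation name to the names of the nodes its channels target."""
    nodes = gltf.get("nodes", [])
    result: dict[str, set[str]] = {}
    for i, anim in enumerate(gltf.get("animations", [])):
        name = anim.get("name", f"animation_{i}")
        targets: set[str] = set()
        for channel in anim.get("channels", []):
            node_index = channel.get("target", {}).get("node")
            if node_index is not None:
                targets.add(nodes[node_index].get("name", f"node_{node_index}"))
        result[name] = targets
    return result


# Tiny hand-written example: one clip touches only the root, one touches bones.
example = {
    "nodes": [{"name": "Root"}, {"name": "Hips"}, {"name": "LeftArm"}],
    "animations": [
        {
            "name": "walk_root_only",
            "channels": [
                {"sampler": 0, "target": {"node": 0, "path": "translation"}},
            ],
        },
        {
            "name": "walk_full",
            "channels": [
                {"sampler": 0, "target": {"node": 1, "path": "rotation"}},
                {"sampler": 1, "target": {"node": 2, "path": "rotation"}},
            ],
        },
    ],
}

report = animated_nodes(example)
```

A clip whose set contains a single root node will slide the character around without moving any limbs, which is the symptom described above.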

Playing the character in a browser

We built a small Three.js test scene: third-person camera, WASD movement, collectibles scattered around, an orbit camera when idle, even sliders for adjusting arm spread and head tilt at runtime. Getting the animation blending right took iteration. The steps that mattered: normalize the model's height, fix the forward axis in code, wire up an AnimationMixer, crossfade between idle and walk, and apply optional bone tweaks on the shoulders and head. With the camera following behind the character, that closes the loop from sketch to something you can walk around.
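The arithmetic behind two of those steps is small enough to sketch outside the engine. The Python below is not our Three.js code. It is a language-neutral illustration of height normalization, state selection, and a linear crossfade, and all three function names are hypothetical. In a real scene the mixer handles the weight blending for you.

```python
def height_scale(min_y: float, max_y: float, target_height: float) -> float:
    """Uniform scale that brings a model's bounding-box height to target_height."""
    height = max_y - min_y
    if height <= 0:
        raise ValueError("bounding box has no height")
    return target_height / height


def pick_state(moving: bool, running: bool) -> str:
    """Choose the animation state from input: idle, walk, or run."""
    if not moving:
        return "idle"
    return "run" if running else "walk"


def crossfade_weights(elapsed: float, duration: float) -> tuple[float, float]:
    """Linear crossfade: (outgoing_weight, incoming_weight), always summing to 1."""
    if duration <= 0:
        return 0.0, 1.0
    t = min(max(elapsed / duration, 0.0), 1.0)
    return 1.0 - t, t


# A model 3.4 units tall scaled to a 1.7-unit character.
scale = height_scale(0.0, 3.4, 1.7)

# Halfway through a 0.5-second idle-to-walk fade, both clips contribute equally.
idle_weight, walk_weight = crossfade_weights(0.25, 0.5)
```

Keeping the two weights summing to 1 is what stops the character from popping or collapsing toward the rest pose in the middle of a transition.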

Try it yourself. WASD to move, Shift to run, Space to jump.

What I'd tell someone doing this tomorrow

Lock one character look before you touch video or 3D tools. Every minute you spend getting that reference right saves an hour of chasing consistency later.

Write a tiny plot first. Then make your start and end frames. Keep reference images within API size limits. Use the T-pose from the same design system for the game mesh.
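For the size-limit point, it helps to compute the target dimensions before resizing anything. The helper below is a small sketch that assumes the limit is expressed as a maximum side length in pixels. That is an assumption, since limits vary by API and some are expressed as file size instead. The `fit_within` name and the 2048 value are placeholders, not documented limits of any tool named here.

```python
def fit_within(width: int, height: int, max_side: int) -> tuple[int, int]:
    """Shrink (width, height) so the longer side is at most max_side, keeping aspect ratio."""
    longest = max(width, height)
    if longest <= max_side:
        return width, height  # already small enough, leave untouched
    new_width = max(1, round(width * max_side / longest))
    new_height = max(1, round(height * max_side / longest))
    return new_width, new_height


# Placeholder limit of 2048 px on the longer side.
landscape = fit_within(4000, 2000, 2048)
portrait = fit_within(1080, 1920, 2048)
```

The portrait frame is already under the placeholder limit, so it passes through unchanged, while the landscape one is scaled down proportionally.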

If you need Mixamo animations on a Tripo rig, go through Blender for the FBX conversion. That step took us the longest to figure out and it's the one that actually unblocked everything.

Two years ago, this pipeline didn't exist. You needed a team and a budget. Now you need a sketch, some taste, and enough patience to wrestle with file format conversions. I think that's a meaningful change in who gets to bring a character to life.

Tools used: image generation models, Seedance 1.5 Pro, Kling 3.0 Pro, Hunyuan3D (fal.ai), Tripo (auto-rig), Blender (GLB to FBX), Mixamo (animations), Three.js (browser game). Reflects early 2026. CDN links may rotate; download GLBs if you need long-lived offline copies.